The Microsoft 365 DLP Spectrum: A 4-Step Strategy for AI Data Security

February 20, 2026

The "Oversharing" Crisis: Copilot as a Professional Truth Serum

The deployment of AI assistants built on Large Language Models (LLMs) and Large Reasoning Models (LRMs), such as Microsoft 365 Copilot, has acted as a professional “truth serum” for corporate and legal data environments. To keep the AI from weaponizing legacy permission flaws, organizations must build robust Data Loss Prevention (DLP) policies into their AI Data Security strategy.

For years, organizations relied on “security by obscurity”: the fragile hope that sensitive information buried in complex hierarchies or legacy “public” folders would remain unvisited. Generative AI has rendered this approach functionally extinct. Because Microsoft 365 Copilot retrieves content through Microsoft Graph, which honors technical permissions rather than departmental expectations, the AI will retrieve and serve any data the user can technically “see,” including files shared organization-wide years ago and since forgotten.
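The mechanism is easy to see in miniature. The Python sketch below models permission-trimmed retrieval with a hypothetical `Document` record and a hypothetical `EVERYONE` marker for organization-wide shares. It is not the Microsoft Graph API; it only illustrates that the filter asks whether access was granted, never whether it was intended.

```python
from dataclasses import dataclass, field

EVERYONE = "Everyone"  # hypothetical marker for an organization-wide share


@dataclass
class Document:
    """Hypothetical index entry: a file path plus the principals granted read access."""
    path: str
    allowed: set[str] = field(default_factory=set)


def visible_to(user: str, groups: set[str], docs: list[Document]) -> list[Document]:
    """Return every document the user can technically read.

    The only test is whether some access entry matches the user, one of the
    user's groups, or the organization-wide marker. Nothing here asks whether
    the share was intentional or is still appropriate.
    """
    principals = {user, EVERYONE} | groups
    return [d for d in docs if d.allowed & principals]


if __name__ == "__main__":
    index = [
        Document("/hr/salaries-2019.xlsx", {EVERYONE}),  # shared broadly years ago
        Document("/legal/matter-42/brief.docx", {"legal-team"}),
        Document("/finance/q3-forecast.xlsx", {"finance"}),
    ]
    for doc in visible_to("alice", {"marketing"}, index):
        print(doc.path)  # prints /hr/salaries-2019.xlsx
```

In this model, a marketing user asking about compensation would be served the forgotten 2019 salary workbook, because an old organization-wide grant is indistinguishable from a deliberate one.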

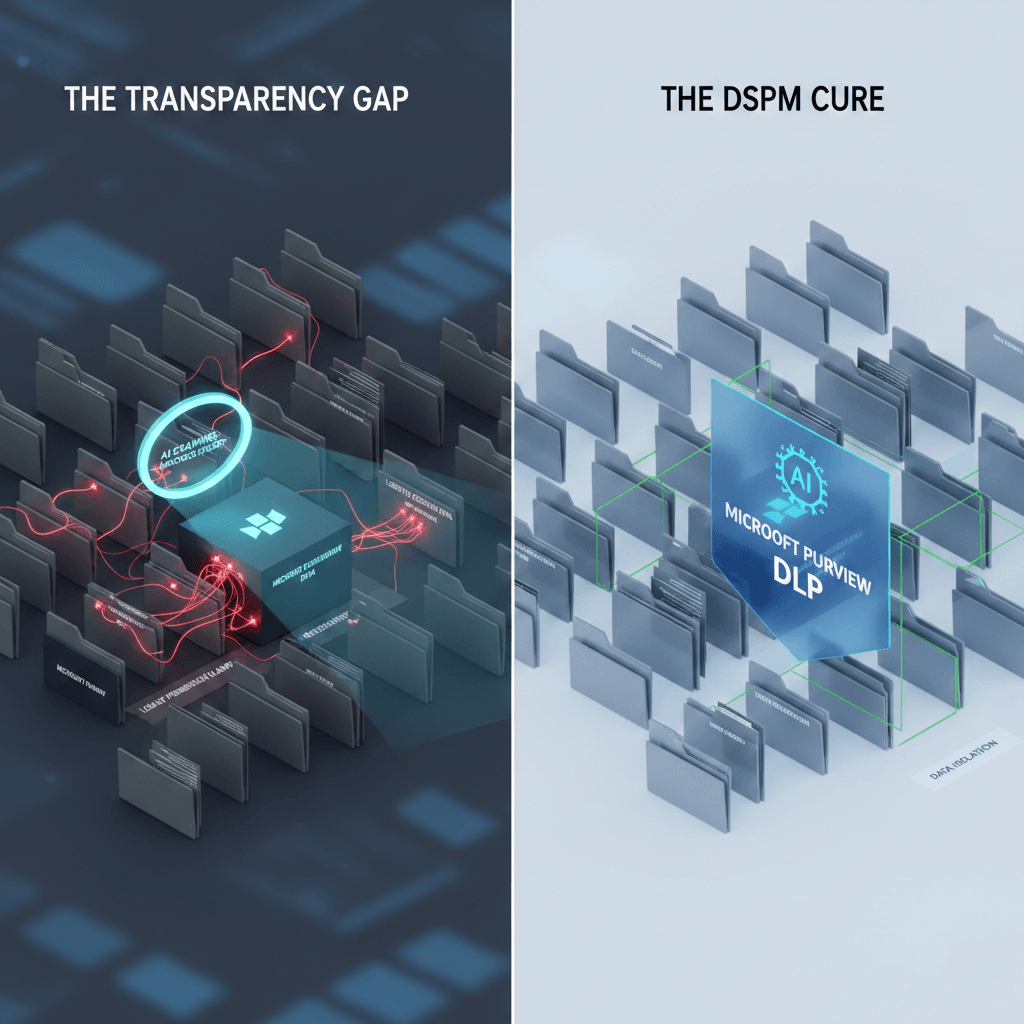

Information architects must recognize the “transparency gap”: a profound disconnect between in-house legal expectations for data handling and the reality of technical access. The “re-identifiability” risk compounds this crisis. The Bressler Risk Blog highlights that anonymization is a moving target: even when direct identifiers are stripped, fact patterns can function as identifiers. The risk is highest when the dataset is small or unique, or when the underlying disputes are public. If these exposures poison the “strategic infrastructure” of your data, the AI turns legacy permission flaws into live disclosures.
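One standard way to measure this risk is k-anonymity: a dataset is k-anonymous when every combination of quasi-identifiers (here, fact-pattern fields) is shared by at least k records. The sketch below assumes matter summaries stored as Python dictionaries with hypothetical field names; any group smaller than k flags a matter that could be re-identified even after client names are removed.

```python
from collections import Counter


def group_sizes(records: list[dict], quasi_identifiers: list[str]) -> Counter:
    """Count how many records share each combination of quasi-identifier values."""
    return Counter(tuple(r[q] for q in quasi_identifiers) for r in records)


def k_anonymity(records: list[dict], quasi_identifiers: list[str]) -> int:
    """Return k: the size of the smallest group of indistinguishable records."""
    if not records:
        raise ValueError("k-anonymity is undefined for an empty dataset")
    return min(group_sizes(records, quasi_identifiers).values())


def reidentifiable(records: list[dict], quasi_identifiers: list[str], k: int) -> list[tuple]:
    """List the fact-pattern combinations shared by fewer than k records."""
    return [combo for combo, n in group_sizes(records, quasi_identifiers).items() if n < k]


if __name__ == "__main__":
    # Client names already stripped; the remaining fields are the "fact pattern".
    matters = [
        {"industry": "energy", "venue": "S.D. Tex.", "year": 2021, "claim": "breach"},
        {"industry": "energy", "venue": "S.D. Tex.", "year": 2021, "claim": "breach"},
        {"industry": "biotech", "venue": "D. Del.", "year": 2022, "claim": "patent"},
    ]
    fields = ["industry", "venue", "year", "claim"]
    print(k_anonymity(matters, fields))        # prints 1
    print(reidentifiable(matters, fields, 2))  # prints [('biotech', 'D. Del.', 2022, 'patent')]
```

k-anonymity is a coarse measure: a public docket can supply the missing link even for larger groups, so a passing score is necessary rather than sufficient.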

Tackling "ROT" and the Calcification Problem

Effective AI Data Security requires the immediate remediation of ROT (redundant, obsolete, and trivial content). By using Microsoft Purview to identify and prune redundant data, firms can reduce “hallucinations of omission” in Microsoft 365 Copilot. Within the Hallucination Framework (arXiv), ROT manifests as two primary failure modes:

- Knowledge Gaps and Outdated Information (Section 4.2.1): When an LRM relies on stale data (e.g., a 2023 training cutoff to answer questions about 2025 events), it produces “hallucinations of omission,” failing to incorporate current regulatory or financial realities.

- Noisy, Biased, or Spurious Correlations (Section 4.2.2): Over-reliance on “stochastic parrots” trained on web-scale data or noisy internal archives leads the model to learn incorrect associations—such as linking a former executive to a current corporate entity.
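The redundant and obsolete categories can be approximated with simple inventory heuristics before any cleanup decision is made. The sketch below is an illustrative assumption, not Purview’s classification logic: it uses a hypothetical `FileRecord` type, treats byte-identical files as redundant, files older than a chosen cutoff as obsolete, and very small files as trivial.

```python
import hashlib
from dataclasses import dataclass
from datetime import date


@dataclass
class FileRecord:
    """Hypothetical inventory row; in practice these signals would come from Purview or SAM reports."""
    path: str
    content: bytes
    last_modified: date


def triage_rot(files: list[FileRecord], stale_before: date, min_bytes: int = 64) -> dict[str, list[str]]:
    """Label files as redundant, obsolete, or trivial using simple, assumed heuristics.

    - redundant: byte-identical to a file seen earlier in the list (SHA-256 match)
    - obsolete:  last modified before the staleness cutoff
    - trivial:   smaller than min_bytes (a crude stand-in for "no business value")
    A file can carry more than one label.
    """
    labels: dict[str, list[str]] = {"redundant": [], "obsolete": [], "trivial": []}
    first_seen: dict[str, str] = {}  # content digest -> path of first occurrence
    for f in files:
        digest = hashlib.sha256(f.content).hexdigest()
        if digest in first_seen:
            labels["redundant"].append(f.path)
        else:
            first_seen[digest] = f.path
        if f.last_modified < stale_before:
            labels["obsolete"].append(f.path)
        if len(f.content) < min_bytes:
            labels["trivial"].append(f.path)
    return labels


if __name__ == "__main__":
    inventory = [
        FileRecord("msa-template.docx", b"x" * 100, date(2024, 5, 1)),
        FileRecord("msa-template (1).docx", b"x" * 100, date(2024, 6, 1)),
        FileRecord("exec-roster-2019.docx", b"y" * 200, date(2019, 1, 1)),
        FileRecord("todo.txt", b"ok", date(2025, 1, 1)),
    ]
    print(triage_rot(inventory, stale_before=date(2022, 1, 1)))
```

The stale executive roster is exactly the kind of file that teaches a model to link a former executive to a current entity; flagging it is the first step, and the archive-or-delete decision stays with the records owner.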

ROT also triggers the Calcification Problem. Because next-token prediction is autoregressive, models prioritize local fluency over global factual consistency and “compulsively complete patterns” found in outdated or repetitive data. Evangelize Consulting notes that roughly 80% of typical agreements rely on standardized templates; a model trained on, or grounded in, toxic “trash” data will simply calcify these suboptimal patterns and serve them as fluent yet dangerously incorrect legal work product.

The Cost of Trash Data

- Efficiency Drain: 40% to 60% of document review time is currently squandered on boilerplate or repetitive data that adds no strategic value.

- Contextual Override: In Retrieval-Augmented Generation (RAG) systems, noisy or irrelevant data can force a “contextual override,” where the AI prioritizes toxic immediate context over its broader internal knowledge, leading to inconsistent logic.

- Extrinsic Hallucinations: Grounding the model in outdated archives (the “2023 cutoff” trap) invites it to fabricate “facts” about the present from the shadows of the past.
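A common mitigation for contextual override is to filter retrieved passages before they reach the model. The sketch below is a hypothetical pre-processing step, not a Copilot setting; it assumes each retrieved `Chunk` carries a similarity score and an “as of” date, and drops low-relevance, stale, and duplicated passages.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Chunk:
    """Hypothetical retrieved passage with the metadata the filter relies on."""
    text: str
    source: str
    score: float   # retriever similarity; assumed to be in [0, 1]
    as_of: date    # date the source was last verified as current


def filter_context(chunks: list[Chunk], *, min_score: float, not_before: date, max_chunks: int) -> list[Chunk]:
    """Keep the most relevant fresh, unique passages, up to max_chunks."""
    kept: list[Chunk] = []
    seen_text: set[str] = set()
    for c in sorted(chunks, key=lambda ch: ch.score, reverse=True):
        if len(kept) >= max_chunks:
            break
        if c.score < min_score:
            continue  # irrelevant noise
        if c.as_of < not_before:
            continue  # stale source: the "2023 cutoff" trap
        if c.text in seen_text:
            continue  # boilerplate repeated across copies adds no signal
        seen_text.add(c.text)
        kept.append(c)
    return kept


if __name__ == "__main__":
    retrieved = [
        Chunk("Rate cap is 3%.", "policy-2021.docx", 0.93, date(2021, 2, 1)),
        Chunk("Rate cap is 5%.", "policy-2025.docx", 0.91, date(2025, 3, 1)),
        Chunk("Rate cap is 5%.", "policy-2025 (copy).docx", 0.90, date(2025, 3, 1)),
        Chunk("Cafeteria menu", "menu.docx", 0.20, date(2025, 4, 1)),
    ]
    for c in filter_context(retrieved, min_score=0.5, not_before=date(2024, 1, 1), max_chunks=3):
        print(c.source, "->", c.text)  # prints: policy-2025.docx -> Rate cap is 5%.
```

Sorting by score first spends the passage budget on the most relevant survivors, while the freshness cutoff keeps a higher-scoring but outdated policy from overriding the current one.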

The Toolset: SharePoint Advanced Management (SAM) and Data Security Posture Management (DSPM) as Filters

To move from a toxic environment to a trustworthy one, organizations must deploy Microsoft Purview, including its DSPM capabilities, alongside SAM. Together these tools act as a high-fidelity Data Loss Prevention filter, keeping sensitive information properly isolated as it moves through the Microsoft 365 Copilot reasoning pipeline. They go beyond simple deletion: they implement the “technical controls” needed to prevent cross-matter data blending and enforce data isolation.

Architecturally, data isolation requires segmented instances and region-locked environments. The governance layer must also shift from reactive flagging to “compliance upfront.” Following Elevate’s strategic priorities, AI-enabled spend and billing tools should enforce Outside Counsel Guidelines (OCGs) at the point of intake. Embedding risk management throughout the Enterprise Legal Management (ELM) system ensures that only verified, compliant data enters the reasoning pipeline.
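Enforcing OCGs at intake amounts to checking each invoice entry against the client’s rules before it is accepted, and recording a readable reason for every flag. The sketch below assumes a hypothetical `LineItem` type and made-up guideline values (`MAX_RATE`, `MAX_HOURS_PER_ENTRY`, `NON_BILLABLE_TERMS`); it is not any ELM vendor’s API.

```python
from dataclasses import dataclass


@dataclass
class LineItem:
    """One invoice entry as submitted by outside counsel (hypothetical shape)."""
    role: str
    hours: float
    rate: float
    description: str


# Hypothetical guideline values, for illustration only; real OCGs vary by client.
MAX_RATE = {"partner": 950.0, "associate": 600.0, "paralegal": 250.0}
MAX_HOURS_PER_ENTRY = 10.0
NON_BILLABLE_TERMS = ("file organization", "clerical")


def check_line(item: LineItem) -> list[str]:
    """Return human-readable reasons an entry violates the guidelines (empty if compliant)."""
    reasons = []
    cap = MAX_RATE.get(item.role)
    if cap is None:
        reasons.append(f"unknown timekeeper role '{item.role}'")
    elif item.rate > cap:
        reasons.append(f"rate {item.rate:.2f} exceeds {item.role} cap {cap:.2f}")
    if item.hours > MAX_HOURS_PER_ENTRY:
        reasons.append(f"{item.hours:g} hours exceeds the per-entry limit")
    text = item.description.lower()
    for term in NON_BILLABLE_TERMS:
        if term in text:
            reasons.append(f"non-billable task: '{term}'")
    return reasons


def intake(items: list[LineItem]) -> tuple[list[LineItem], list[tuple[LineItem, list[str]]]]:
    """Split entries at the point of intake: compliant ones pass, others are held with reasons."""
    accepted, held = [], []
    for item in items:
        reasons = check_line(item)
        if reasons:
            held.append((item, reasons))
        else:
            accepted.append(item)
    return accepted, held
```

Returning reasons alongside each held entry supplies the “explanation of AI-generated flags” the governance table calls for: reviewers see why an entry was stopped, not just that it was.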

The Governance Filter: From Toxic to Trustworthy

| Data Risk Type | Technical Root Cause (arXiv) | DSPM/SAM Remediation (Bressler/Elevate) |
| --- | --- | --- |
| Knowledge Gaps | Outdated info (2023 cutoff vs. 2025 reality) | Region-locked environments: Ensure RAG pipelines only access “live” jurisdictional data. |
| Confidentiality Breach | Autoregressive prioritization of local fluency | Segmented Instances: Technical tenant-level isolation and strict audit logs. |
| Calcification | Compulsive pattern completion on noisy data | Data-Isolation Controls: Use SOC 2 Type II and architecture diagrams to exclude toxic sets. |
| Toxic Spend Data | Spurious correlations in billing history | Compliance Upfront: Automated OCG enforcement and explanation of AI-generated flags. |
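The Segmented Instances and Data-Isolation rows can be illustrated at the retrieval layer. The sketch below is a hypothetical application-level stand-in for those controls, not a Microsoft 365 feature: it assumes every indexed item is tagged with a matter ID and that a user’s ethical walls are known as a set of matter IDs.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class IndexedItem:
    """Hypothetical index entry tagged with the matter it belongs to."""
    path: str
    matter_id: str


def scope_to_matter(items: list[IndexedItem], matter_id: str, walled_off: set[str]) -> list[IndexedItem]:
    """Return only the requested matter's items, refusing matters behind an ethical wall.

    Filtering happens before retrieval results reach the model, so documents
    from other matters cannot be blended into the same answer.
    """
    if matter_id in walled_off:
        raise PermissionError(f"matter {matter_id} is behind an ethical wall for this user")
    return [item for item in items if item.matter_id == matter_id]
```

Raising an error for a walled-off matter, rather than silently returning nothing, leaves an auditable event, which supports the strict audit logs the table pairs with segmentation.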

The Lifecycle Management Strategy: Archiving "Dead Matters"

A mature AI Data Security strategy treats the LRM as a formal service. To maintain the integrity of the Service Value System, organizations must perform aggressive “AI Model Lifecycle Management.” Using Microsoft Purview to automate the archiving of “dead matters” ensures that Data Loss Prevention rules keep pace as the firm’s data footprint grows.

“Dead matters” (cases closed years ago) must be systematically archived and removed from the AI’s active reasoning chain. If left live, these files become “incidents” or “exceptions” that derail the model’s reasoning trajectory. Following the Hallucination Framework’s “External Knowledge Grounding” (Section 3.1), the AI should have access only to live, verified databases and APIs. Excluding the firm’s dead history from active indexing keeps the AI focused on current, high-value work product rather than the toxic remnants of obsolete matters.
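The archiving rule can be stated precisely enough to automate. The sketch below assumes a hypothetical `Matter` record, a three-year dormancy threshold, and that matters under legal hold stay live regardless of age; actual thresholds belong to the firm’s retention schedule.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Matter:
    """Hypothetical matter record from the firm's matter-management system."""
    matter_id: str
    status: str                  # "open" or "closed"
    closed_on: Optional[date]    # None while the matter is open
    legal_hold: bool = False     # assumption: held matters are never archived


def partition_matters(matters: list[Matter], today: date, dormancy_years: int = 3) -> tuple[list[str], list[str]]:
    """Split matter IDs into (live, archive) for the AI's active index."""
    live: list[str] = []
    archive: list[str] = []
    for m in matters:
        is_dead = (
            m.status == "closed"
            and m.closed_on is not None
            and not m.legal_hold
            and (today - m.closed_on).days >= dormancy_years * 365  # ~365 days per year
        )
        if is_dead:
            archive.append(m.matter_id)
        else:
            live.append(m.matter_id)
    return live, archive


if __name__ == "__main__":
    matters = [
        Matter("M-100", "open", None),
        Matter("M-200", "closed", date(2018, 6, 30)),
        Matter("M-300", "closed", date(2025, 11, 1)),
        Matter("M-400", "closed", date(2017, 1, 1), legal_hold=True),
    ]
    live, archive = partition_matters(matters, today=date(2026, 2, 20))
    print("keep indexed:", live)
    print("archive:", archive)
```

Years are approximated as 365 days, which is adequate for a dormancy threshold but not for date-exact retention deadlines.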

Clean Data Equals Smart AI

The fundamental axiom of the legal AI era is: Better data inputs = Better AI outputs. Organizations that fail to remediate their data toxicities—or neglect to update their Data Loss Prevention policies for the age of Microsoft 365 Copilot—will be left managing an AI that is confidently incorrect.

Strategic Checklist for AI Governance

- Clarify Ownership: Expressly define who owns the “strategic infrastructure” of legal data and who possesses the authority to use it for model fine-tuning.

- Implement Guardrails: Verify data-isolation through environment architecture diagrams and SOC 2 Type II documentation to ensure no cross-matter data blending occurs.

- Audit Regularly: Establish Benefit Realization Plans to ensure the AI remains grounded in current work product. Use dashboards to monitor for “contextual override” and ensure the LRM service is delivering measurable value without succumbing to calcified ROT.

Turn your data liability into strategic infrastructure.

Ransomware defense and AI readiness are the same project. If you’re ready to fix data sprawl and secure your firm’s future, let’s build your roadmap together. Connect with a Cocha Strategist by filling out the form below to begin your “Crawl, Walk, Run” journey.


Have Any Questions?

Call or email Cocha. We can help with your cybersecurity needs!

- (281) 607-0616

- info@cochatechnology.com